Deploying Magento2 – Future Prospects [4/4]

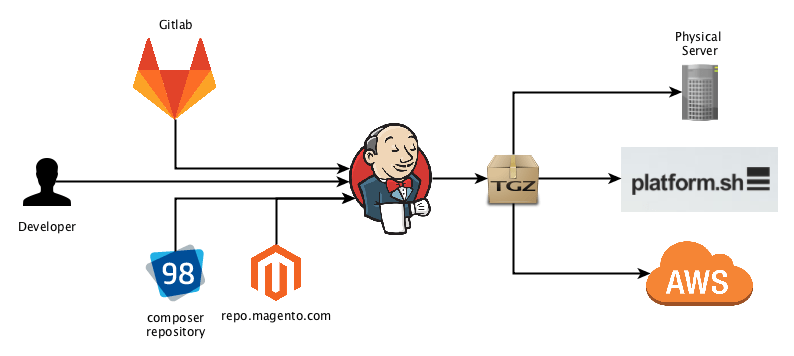

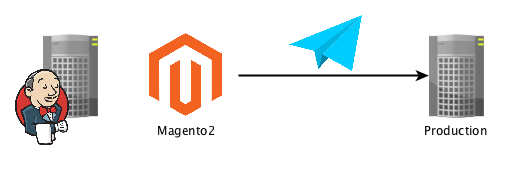

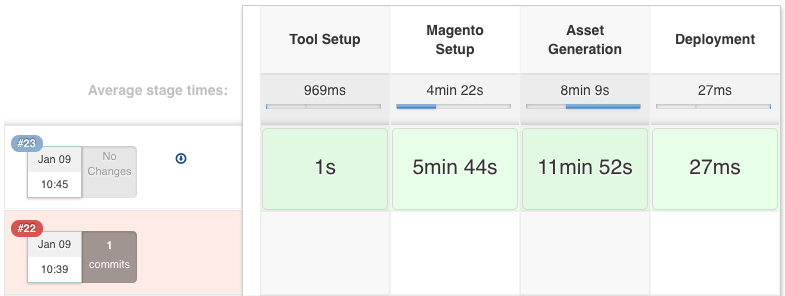

This post is part of a series:

1. History and Overview of Magento2 Deployment
2. Jenkins Build-Pipeline Setup (building assets, controlling the deployment)
3. Releasing to Production (delivering code and assets, managing releases)
4. Future Prospects (cloud deployment, artifacts)

Recap

In the previous posts we dived into our deployment pipeline and the release to the staging or production environments. You should read those posts before this one. In this post we will share our thoughts on where we want to go with our…